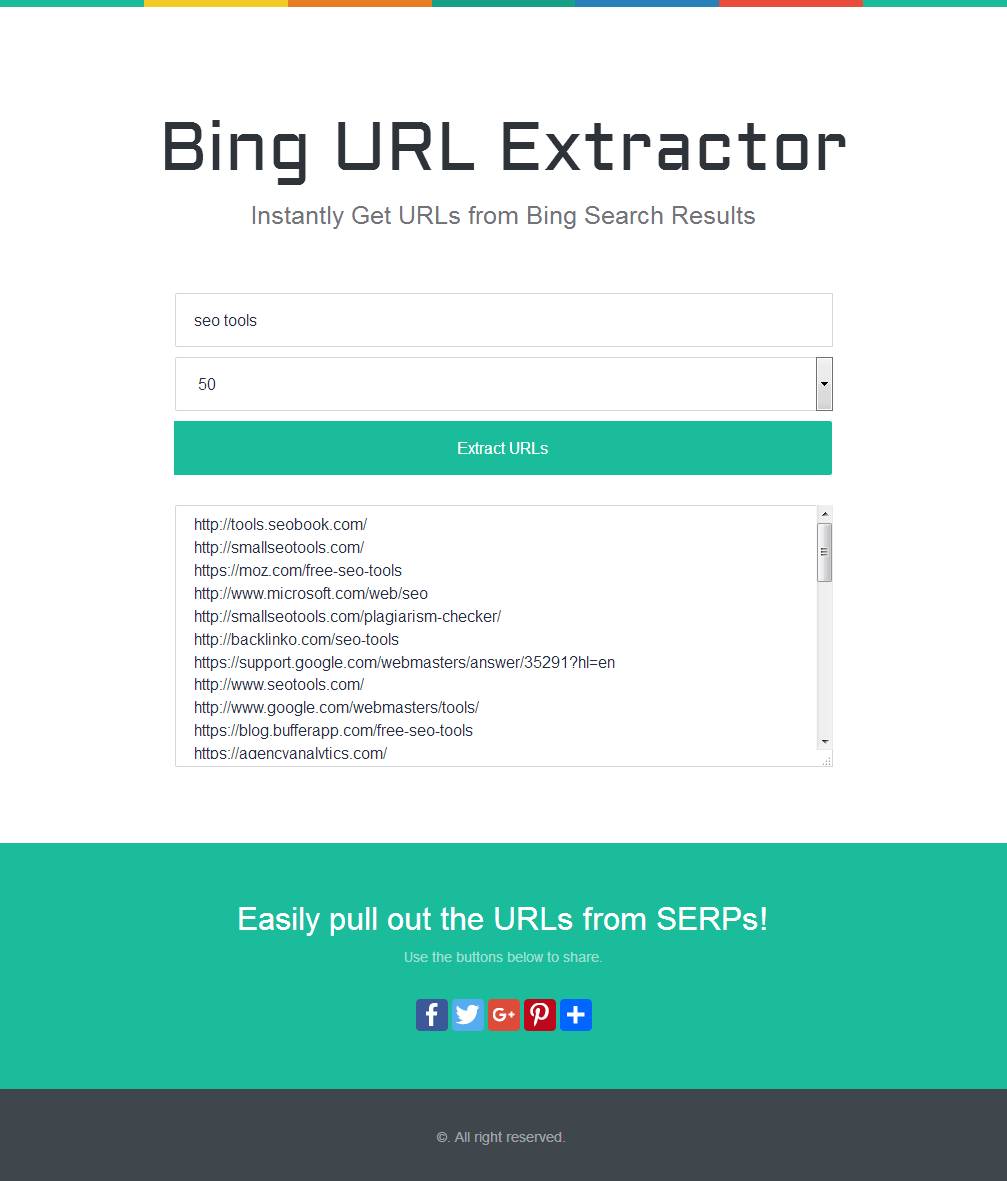

One way to extract information from web pages can be based on the UNIX grep command.

Web scraping, web harvesting, or web data extraction is data scraping used for extracting data from websites.

I have written a few posts discussing descriptive analyses of the evaluation of National Standards for New Zealand primary schools. The data for roughly half of the schools was made available by the media, but the full version of the dataset is only provided on a school-by-school basis. In the page for a given school there may be a link to a PDF file with the information on standards sent by the school to the Ministry of Education.

I’d like to keep a copy of the PDF reports for all the schools for which I do not have performance information, so I decided to write an R script to download just over 1,000 PDF files. Once I can identify all the schools with missing information I just loop over the list, using the fact that all URLs for the school pages start with the same prefix. I download the page, look for the name of the PDF file and then download the PDF file, which is named school_schoolnumber.pdf.

    # Build the local file name, e.g. <download.folder>school_<school>.pdf
    pdf.name <- paste(download.folder, 'school_', school, '.pdf', sep = '')
    # PDFs are binary files, so write them in binary mode ("wb")
    download.file(pdf.url, pdf.name, method = 'auto', quiet = FALSE, mode = "wb",
                  cacheOK = TRUE, extra = getOption(""))

Of course life would be a lot simpler if the Ministry of Education made the information available in a usable form for analysis.

It would be great if you can help me to get the information from the reports. The following link randomly chooses a school; click on the “National Standards” tab and open the PDF file. Then type the achievement numbers for reading, writing and mathematics in this Google Spreadsheet. No need to worry about different values per sex or ethnicity; the total values will do.
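The download loop described here (fetch the school page, look for the PDF link, save the file) could be sketched roughly as follows. This is only an illustration, not the original script: the helper names `extract_pdf_url` and `download_school_reports`, the `base.url` argument and the assumption that the link appears as an absolute `href="....pdf"` attribute are all hypothetical, since the actual page layout is not shown in the post.

```r
# Hypothetical helper: return the first PDF link found in the lines of a page,
# using a grep-style regular expression search. Returns NA if there is none.
extract_pdf_url <- function(page.lines) {
  pattern <- 'href="[^"]+\\.pdf"'
  # regmatches() keeps only the lines where the pattern matched
  hits <- regmatches(page.lines,
                     regexpr(pattern, page.lines, ignore.case = TRUE))
  if (length(hits) == 0) return(NA_character_)
  # Strip the leading href=" and the trailing quote
  gsub('^href="|"$', '', hits[1], ignore.case = TRUE)
}

# Hypothetical loop over school numbers. base.url is the common start of all
# school page URLs; download.folder is assumed to end with a path separator.
download_school_reports <- function(schools, base.url, download.folder) {
  for (school in schools) {
    page <- readLines(paste(base.url, school, sep = ''), warn = FALSE)
    pdf.url <- extract_pdf_url(page)
    if (is.na(pdf.url)) next  # this school has no PDF report linked
    # Assumes pdf.url is absolute; a relative link would need the site
    # address pasted in front of it.
    pdf.name <- paste(download.folder, 'school_', school, '.pdf', sep = '')
    download.file(pdf.url, pdf.name, mode = 'wb')
  }
}
```

The link extraction is kept separate from the downloading so it can be checked on a few saved pages before hitting the site a thousand times.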
